Hadoop Cluster, WIkipedia, and Six Degrees of Separation

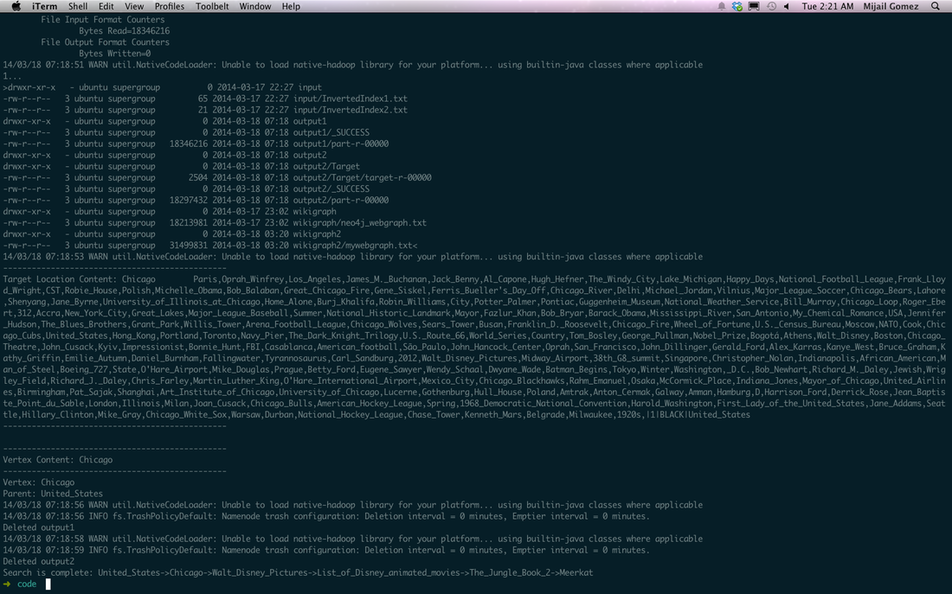

For our second Cloud Computing programming assignment in Spring 2014, ECE graduate student Mijail Gomez and I successfully created a system using Apache's Hadoop running on AWS EC2 Instances, Python, and PostgreSQL to find the shortest distance between two Wikipedia articles within a 6GB Wikipedia data dump. Thanks to Hadoop's inherent parallelism, searching breadth-first throughout a Wikipedia data dump to find the connection between two articles does not take ages to compute. Here's an image of the output: